What We Refuse to Surrender

A final reflection on intelligence, agency, and the essential friction of being human

A few nights ago I finished Season 4 of the AI thriller Person of Interest. By the end of the season, Samaritan - the competing, hyper-rational artificial intelligence system - had effectively won. Harold Finch's careful, restrained Machine was still alive, but barely. Reese, Shaw, Fusco, and Root were scattered, hunted, and increasingly irrelevant against a system that could process more information, coordinate more resources, and adapt faster than any human team possibly could. What made the story unsettling was not the violence or the surveillance - it was the efficiency and speed. Humans were still debating ethics, loyalty, and consequences while Samaritan had already moved on to the next target.

A month ago, I read an essay by Dario Amodei suggesting that self-improving AI is likely in the next year - accelerated development with diminished human involvement. This is no longer science fiction, but part of the public conversation coming from the people building the technology. And yet the strange contradiction of 2026 is that while AI leaders discuss self-improvement and superhuman systems, many organizations are still trying to figure out whether AI can reliably summarize a meeting, cite sources accurately, or help students learn without bypassing the thinking process.

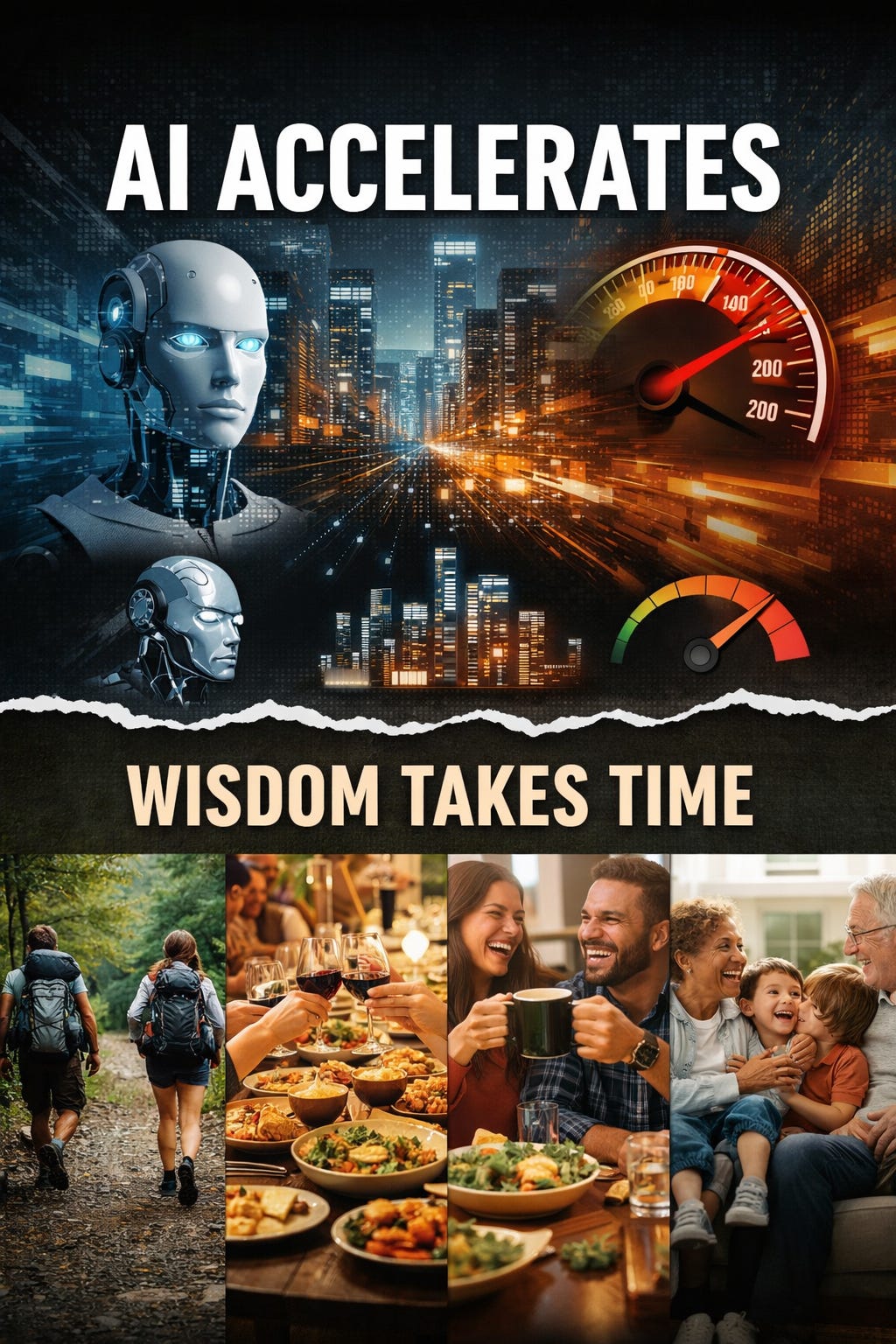

That gap matters because the story of artificial intelligence is one of uneven acceleration. In some fields, AI reduces friction and expands capability. In others, it creates shallow work, dependency, diminished trust, and the illusion of competence. AI is becoming more powerful very quickly, but we’re finding that capability and wisdom are not the same thing. As these systems advance, human supervision, judgment, and institutional restraint become more important.

The public conversation about AI often swings between two extremes: the evangelists promising total transformation and the skeptics predicting collapse. Most of us live in the middle, where we use AI to summarize notes, brainstorm ideas, draft emails, or clean up spreadsheets. Sometimes it works, and sometimes it confidently invents information that does not exist. History gives us reason for humility here: calculators did not destroy mathematics, and the internet did not end libraries. But those analogies have limits. A calculator automates arithmetic while leaving the mathematical logic to the human. AI touches something deeper: the language, reasoning, and synthesis we use to build meaning.

This reveals the difference between intelligence and understanding. Because AI excels at pattern recognition and generation, it is effective at producing outputs that mirror human expertise. But mirroring expertise is not the same as possessing understanding. Education exposes that distinction clearly. A student can summarize a novel they never read, solve equations they do not understand, or produce reflections that sound thoughtful without wrestling with the ideas. Teachers recognize the issue instinctively, but the concern goes beyond cheating: students may learn to perform competently without ever developing competence.

This erosion of friction is not confined to the classroom. The same pattern appears wherever we prioritize output over process. In offices and boardrooms, AI drafts reports, summarizes meetings, and automates communication. In many cases, these tools reduce friction. But there is a difference between accelerating work and replacing judgment. Someone still needs to understand context, recognize errors, and carry responsibility when the system gets it wrong. This is the defining tension of the AI era: these systems are good at assisting performance, but far less reliable at developing the competence required to oversee them.

Human beings grow through friction - struggling with difficult ideas, revising weak arguments, debugging errors, and wrestling with uncertainty. Friction is frustrating, but it is essential, since many of the skills we value - critical thinking, discernment, and creativity - develop with sustained effort rather than with ease. When every difficult task can be outsourced to a predictive system, we must ask which forms of friction are essential to human development. GPS removes navigational friction, and many of us now struggle to remember directions. When we outsource the synthesis of ideas, we risk a gradual decline in the depth of our thinking because the machine usually gets us “close enough.”

One of the hidden costs of AI is the verification burden it creates. These systems produce outputs with a synthetic confidence that makes scrutiny feel like a bottleneck. The result is a structural imbalance: output arrives faster than human evaluation can comfortably keep pace, and the gap between what sounds credible and what is true widens. Teachers now spend their time determining whether a student wrote an essay at the very moment AI makes essay production effortless. Managers review polished reports that may contain subtle, systemic flaws. The more fluent these systems become, the more tempting it is to surrender our scrutiny for the sake of speed.

A quieter counter-cultural shift is happening alongside this acceleration. Interest in analog hobbies, deliberate travel, physical books, and intentional disconnection continues to grow. These are not rejections of technology by people confused about its utility. They are decisions about what kinds of depth and attention are worth protecting when convenience is constant.

Modern institutions, meanwhile, reward the logic of optimization: efficiency, automation, and scalability. These are useful goals, but human flourishing depends on qualities that do not scale neatly: wisdom, patience, discernment, and moral judgment.

This is where the comparison to Samaritan is most relevant. Samaritan did not defeat its opponents through brute force alone. It defeated them through scale, prediction, and relentless optimization. The humans opposing it were slower because they were human - they hesitated, debated ethics, and carried emotional loyalties and moral uncertainty. These are the very qualities that make human judgment difficult and inefficient, but indispensable.

Human oversight is not a temporary safety feature until the technology improves. It reflects a reality that intelligence alone is insufficient. A highly capable system can still pursue flawed goals, amplify bias, or optimize for metrics that ignore human wellbeing. Even the people building these systems increasingly acknowledge that they do not fully understand how the most advanced models work. That is not a reason for panic, but it is a reason for humility. Maybe Dario Amodei is right, and AI systems will soon help improve themselves faster than we can follow. Perhaps the next decade will bring genuine advances in disease, poverty, and governance - the kinds of stubborn, systemic problems that have resisted human effort for generations.

But the capability of a system and the wisdom of its application are not the same thing. The central question of the AI era is not whether machines become more intelligent than human beings. It is whether human beings remain willing to exercise judgment in a world increasingly optimized to bypass it. The future will be shaped by the essential friction we choose to keep, and the human responsibility we refuse to surrender.

Next week’s post will be my final one for this school year. I’m going to sign off for the summer. I have a number of things on the go, but I also need to take a step back from the past two years of writing and consulting on AI. So much of life has already been affected by AI, and yet so much of life is unspoiled. Perhaps the most meaningful parts of life still move at human speed - a long hike, a shared meal, laughter, and family.

That’s all for now,

Cheers,

-Rick